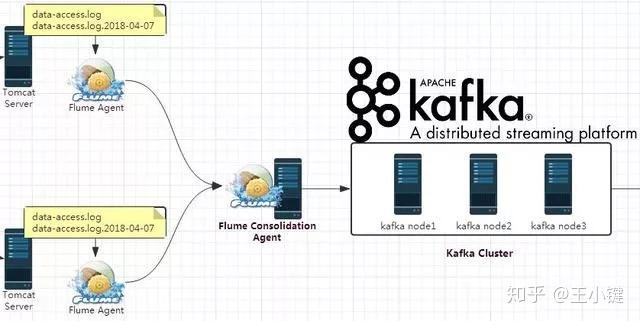

Flume is a distributed system that can be used to collect, aggregate, and transfer streaming events into Hadoop. It comes with many built-in sources, channels, and sinks, including Kafka components: Flume 1.6.0 supports a Kafka source, sink, and channel. IBM Message Hub is a service on Bluemix that is based on Apache Kafka. Can Flume connect to Message Hub? The answer is “yes”, and this article shows you how to use Flume in IBM Open Platform (IOP) 4.2 with Message Hub.

The following directions detail the manual installation of software into IBM Open Platform for Apache Hadoop. These directions, and any binaries that may be provided as part of this article (either hosted by IBM or otherwise), are provided for convenience and make no guarantees as to stability, performance, or functionality of the software being installed. Product support for this software will not be provided (including upgrade support for either IOP or the software described). Questions or issues encountered should be discussed on the BigInsights StackOverflow forum or the appropriate Apache Software Foundation mailing list for the component(s) covered by this article.

Pro: Hadoop integration. Flume was created to efficiently move log data to Apache Hadoop's HDFS.

Pro: Flume is transactional (no events are lost when streams are duplicated), and it can be backed by Kafka.

Top con: Hard to manage. Because Flume cannot multiplex connections, it is extremely hard to manage.

The plugin provides a way for users to send data from Apache Flume to Kafka, bypassing a Flume receiver.

Create a Message Hub instance on Bluemix.

Ensure that the Flume host can connect to the Message Hub brokers. If your IBM BigInsights cluster is an “on premises” setup, install Flume on the edge node, which can span both private and public networks. If you are using BigInsights 4.2 on Cloud, Flume installed on a data node can also connect to Message Hub, because data nodes use the management nodes as their gateway in BigInsights on Cloud.

Running Flume agents from the Ambari web interface

The following steps are taken by the BigInsights on Cloud biadmin user.

On the Flume host, download messagehub.login-1.0.0.jar. Run the following command to make this JAR file readable.

Add messagehub.login-1.0.0.jar to the Flume class path. From the Ambari web interface, click the Flume “Configs” tab, expand “Advanced flume-env”, and append the following line:

`export FLUME_CLASSPATH=$FLUME_CLASSPATH:/home/biadmin/messagehub.login-1.0.0.jar`

Create a file named nf with the following content. Use the credentials from the VCAP_SERVICES environment variable of the Message Hub instance.
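The configuration step could be illustrated with a Python sketch like the one below. It is hedged and is not the article's own file. It reads Message Hub credentials saved from VCAP_SERVICES and renders a Flume 1.6-style properties file that wires a netcat test source to a memory channel and a Kafka sink.

Everything else in it is an assumption. The credentials JSON is assumed to have a `messagehub` entry whose `credentials` list the brokers under `kafka_brokers_sasl`. The agent and component names (`agent`, `src`, `ch`, `sink`), port 44444, the topic, and the script arguments are hypothetical. The SASL/TLS settings that the messagehub.login JAR exists for are deliberately not generated, so treat the output as a skeleton rather than a working Message Hub configuration.

```python
"""Sketch: render a Flume agent properties file that sends events to Message Hub.

Assumptions (not from the original article):
  * The saved VCAP_SERVICES JSON has a "messagehub" entry whose credentials
    list broker addresses under "kafka_brokers_sasl".
  * Component names, the netcat test source, and its port are illustrative.
  * SASL/TLS settings required by Message Hub are NOT generated here.
"""
import json
import sys
from typing import List


def message_hub_brokers(vcap_json: str) -> List[str]:
    """Return the broker host:port strings from a VCAP_SERVICES JSON document."""
    services = json.loads(vcap_json)
    credentials = services["messagehub"][0]["credentials"]
    return list(credentials["kafka_brokers_sasl"])


def render_flume_conf(brokers: List[str], topic: str, agent: str = "agent") -> str:
    """Return properties wiring a netcat source -> memory channel -> Kafka sink."""
    lines = [
        f"{agent}.sources = src",
        f"{agent}.channels = ch",
        f"{agent}.sinks = sink",
        # Test source: each line sent to localhost:44444 becomes one Flume event.
        f"{agent}.sources.src.type = netcat",
        f"{agent}.sources.src.bind = localhost",
        f"{agent}.sources.src.port = 44444",
        f"{agent}.sources.src.channels = ch",
        # In-memory buffer between the source and the sink.
        f"{agent}.channels.ch.type = memory",
        f"{agent}.channels.ch.capacity = 1000",
        f"{agent}.channels.ch.transactionCapacity = 100",
        # Flume 1.6.0 Kafka sink, pointed at the Message Hub brokers.
        f"{agent}.sinks.sink.type = org.apache.flume.sink.kafka.KafkaSink",
        f"{agent}.sinks.sink.brokerList = {','.join(brokers)}",
        f"{agent}.sinks.sink.topic = {topic}",
        f"{agent}.sinks.sink.channel = ch",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical usage: python render_flume_conf.py vcap_services.json my-topic > agent.conf
    if len(sys.argv) == 3:
        with open(sys.argv[1], encoding="utf-8") as handle:
            brokers = message_hub_brokers(handle.read())
        sys.stdout.write(render_flume_conf(brokers, topic=sys.argv[2]))
```

The generated file could then be passed to `flume-ng agent --conf-file <file> --name agent`. The agent name given to `--name` must match the prefix used in the properties.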
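Before an agent is started, a quick TCP reachability test can help confirm the prerequisite that the Flume host can connect to the Message Hub brokers. The Python sketch below only opens and closes a plain TCP connection to each `host:port`. It does not exercise TLS or SASL authentication, so a success shows that the network path (edge node or management-node gateway) is open, not that Flume will be able to log in. The function names are hypothetical, and the addresses you pass in should come from your own Message Hub credentials.

```python
"""Sketch: check plain TCP reachability of Kafka/Message Hub brokers.

Only tests that a TCP connection opens; TLS and SASL login are not checked.
"""
import socket
from typing import Dict, List, Tuple


def parse_broker(address: str) -> Tuple[str, int]:
    """Split a "host:port" string into (host, port); raise ValueError if malformed."""
    host, sep, port = address.rpartition(":")
    if not sep or not host:
        raise ValueError(f"expected host:port, got {address!r}")
    return host, int(port)


def check_brokers(addresses: List[str], timeout: float = 5.0) -> Dict[str, bool]:
    """Map each broker address to True if a TCP connection could be opened."""
    results: Dict[str, bool] = {}
    for address in addresses:
        host, port = parse_broker(address)
        try:
            # create_connection resolves the name and connects; closed on exit.
            with socket.create_connection((host, port), timeout=timeout):
                results[address] = True
        except OSError:
            # Covers DNS failures, refused connections, and timeouts.
            results[address] = False
    return results
```

For example, `check_brokers(["broker-1.example.com:9093"], timeout=3.0)` (a placeholder address) returns a dict mapping that address to `True` or `False`.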
